Language Models and the Problem of Surprise

Why AI Can Simulate Abduction Without Experiencing Model Failure

MODEL-CENTRIC INFERENCE

Greetings,

I’m finally in the copy-editing phase of Augmented Human Intelligence (AHI), so I can turn more of my attention to writing about all things AI. Start here:

Why AI Can Simulate Abduction Without Experiencing Model Failure

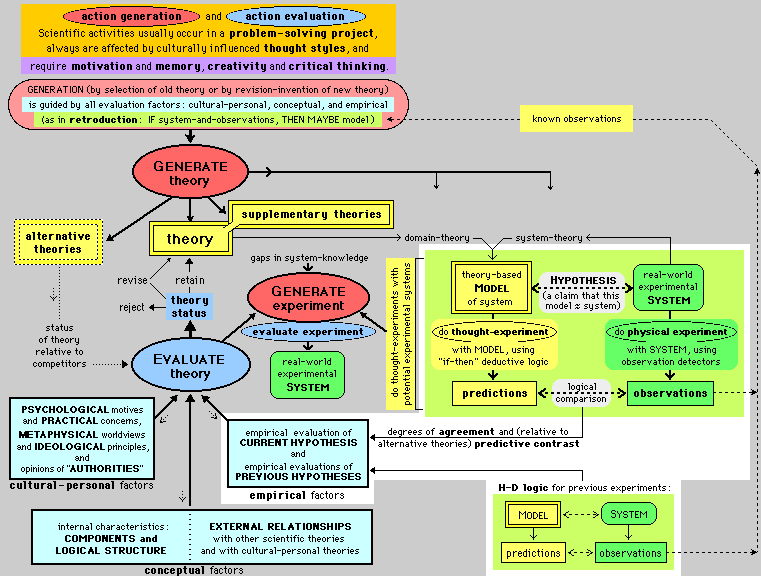

One of the most interesting things about human reasoning is that it occasionally forces us to abandon the very assumptions we started with. Most of the time we reason within a framework: given certain premises, what follows?

But we frequently observe something that doesn’t fit what we expect. Charles Sanders Peirce pointed out over a hundred years ago that much of what we encounter, even day to day, doesn’t quite “fit” our expectations, and so we resort to a type of inference he called abduction. We abduce when a surprising fact forces us to consider that one of our assumptions may simply be wrong. I used this example in my book: if the streets are wet, we might infer that it rained, but if the sky is cloudless and the ground is still soaked, we should start looking for another explanation, such as a broken hydrant.

What matters in these moments is not just that we produce a new explanation. It is that the surprise forces a shift in the underlying model we were using to make sense of the situation. The world is constantly disappointing us, or rather, disappointing the models we build from our expectations. No matter; we abduce what might be true given that something surprising has been observed.